August 2021 – Volume 25, Number 2

| Title | Say It: English Pronunciation |
| Author | Phona |
| Contact Information | info |
| Type of Product | Smart device app software |
| Hardware Requirements | Smart device (phone or tablet) with Internet access |
| Operating System | Android and iOS |
| Registration | None |
| Price | Free trial version. Full premium version available for $15.99/month (Android); $6.99 one-time purchase for access to American English words, plus other in-app purchases (iOS) |

Pronunciation training for non-native speakers of English is an important yet often overlooked aspect of English education, as learners need to communicate using intelligible pronunciation in their daily interactions. Recent pronunciation analysis studies have found that pronunciation instruction should be tailored to the individual learner, as nearly every speaker has different pronunciation needs (Munro, 2018). While individualized pronunciation instruction poses challenges in traditional classroom settings, one solution is to use educational apps with multimedia features. Studies have found that systems using visual feedback displays can provide effective pronunciation feedback (e.g., Hardison, 2004; Ramírez Verdugo, 2006), which increases student autonomy and helps teachers make better use of class time (McCrocklin, 2016). One tool created to support pronunciation training is Say It: English Pronunciation Made Easy, an informative app which aims to improve the pronunciation, speaking, and listening skills of English language learners at all levels.

General Description

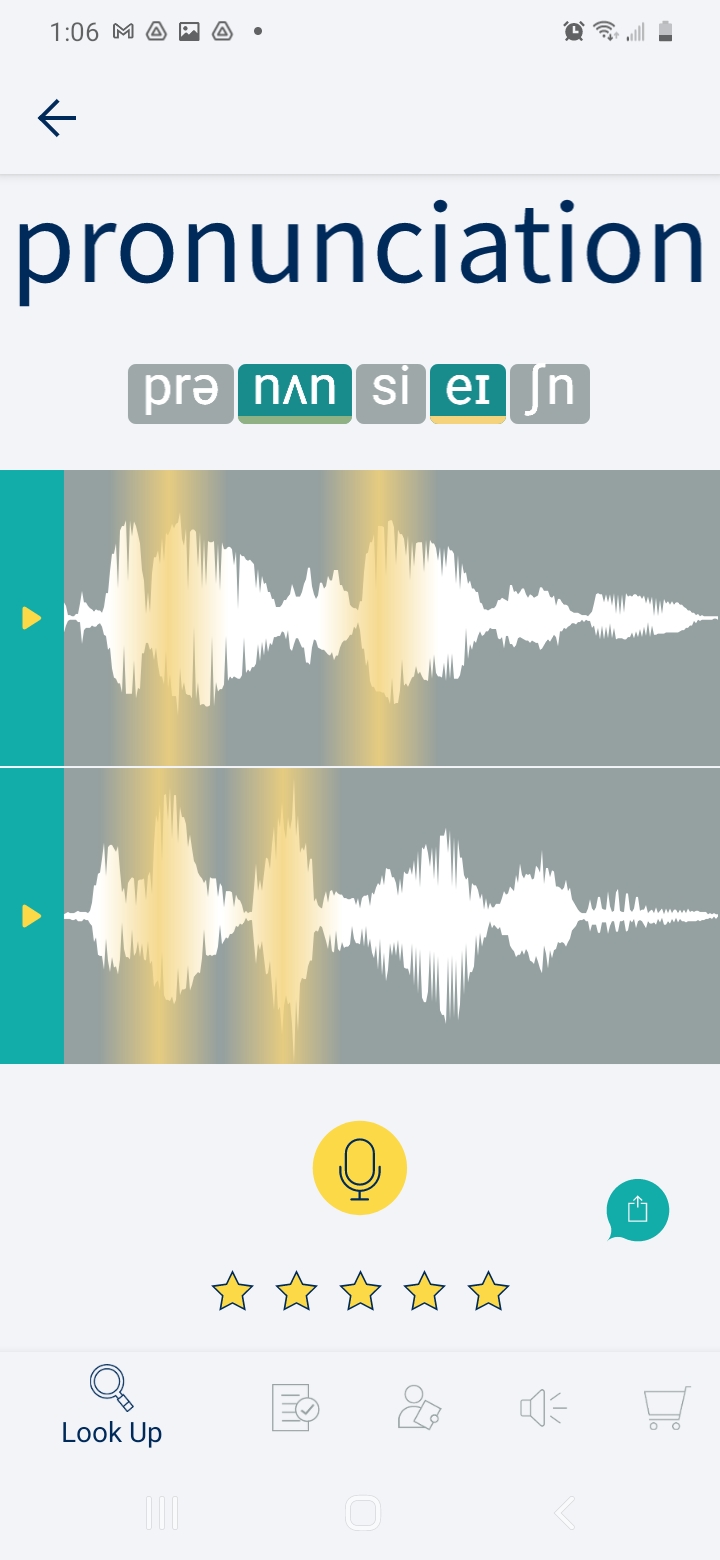

Say It contains four main features: Look Up, Tests, My Words, and Sounds. The first feature, Look Up, allows the user to search for a word in the dictionary, see its phonetic spelling, listen to its pronunciation in either Standard American English or British English, and see a waveform of that pronunciation. This waveform (see Figure 1) displays the intensity and duration of each part of the sound. To slow down the recording, the user can drag their finger across the waveform, allowing them to identify which section of the waveform is associated with each sound.

The phonetic transcription is displayed above the waveform in syllables. When the user taps on a syllable, the constituent sounds of that syllable are played individually. The syllable receiving primary stress is shown in green and underlined in yellow. Similarly, in the waveform, the primary stress is highlighted in yellow.

Before or after listening to the native speaker’s pronunciation of the word, the user may record their own pronunciation by tapping the yellow microphone icon. A visual representation of their recording will then appear below the model waveform. After comparing their production to the model, the user can give their pronunciation a score out of five stars. The user may re-record the word an unlimited number of times and can also share recordings with others via email.

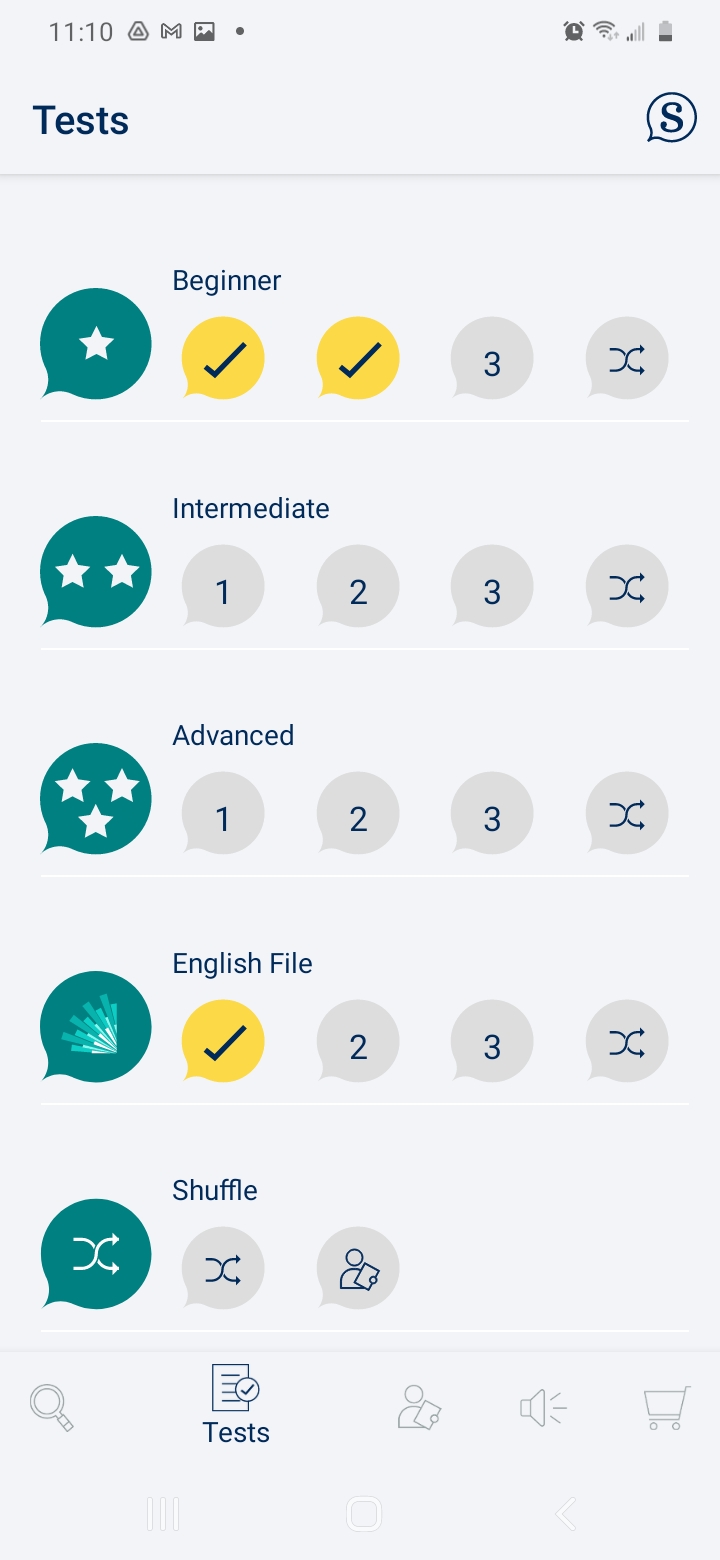

In the second feature, Tests, each module contains ten items. For each item, the user first records their own pronunciation of the word presented without hearing the model speech. After recording, the user can see the waveform and hear the audio recording produced by the model, and then score their own production by comparing the two. Tests are offered at beginning, intermediate, and advanced levels, and contain items which differ in their frequency rates (Browne et al., 2013) and average number of syllables. A similar English File test (see Figure 2) features vocabulary from Oxford’s series of the same name (Latham-Koenig et al., 2013).

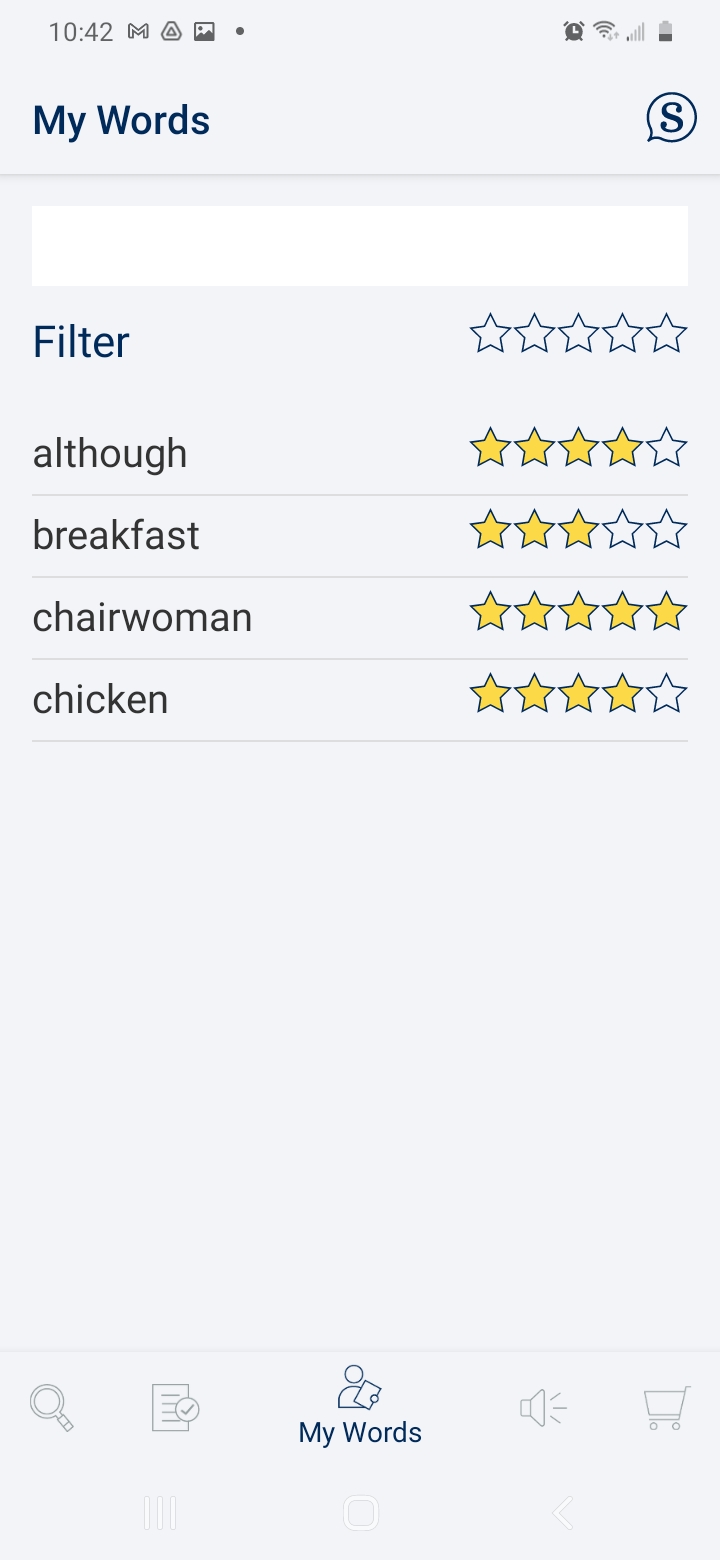

The third feature, My Words, maintains a searchable list of the words the user has practiced previously (see Figure 3). It allows the user to search for words which they previously rated by applying a filter of one, two, three, or more stars.

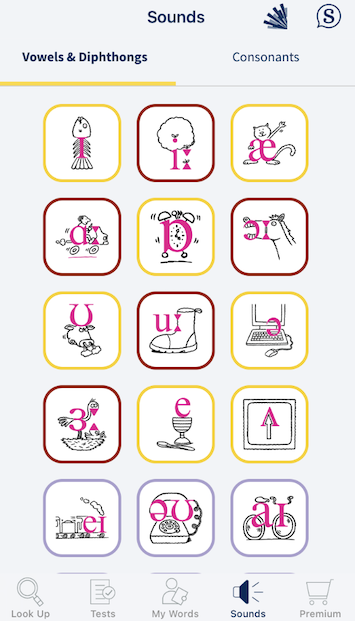

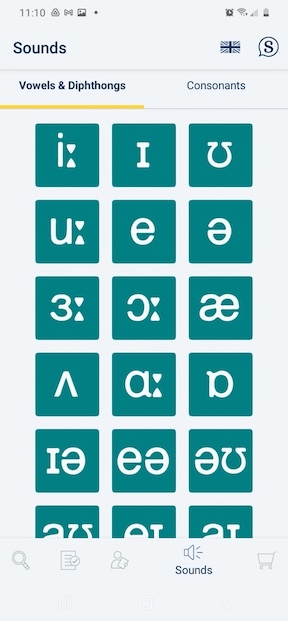

The fourth feature, Sounds, displays an IPA chart (see Figures 4a and 4b), which differs for iOS and Android users. When the user taps a symbol, the corresponding sound plays. On the iOS version, an in-app purchase is required to access the entire chart; iOS users may also purchase additional words found in the Oxford textbook series English File (Latham-Koenig et al., 2013), which offers contextualization through sample words and accompanying illustrations.

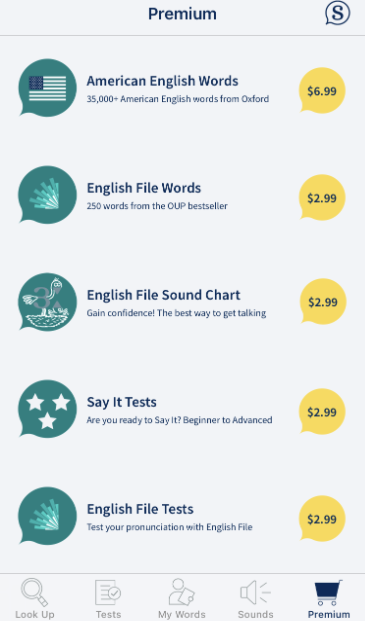

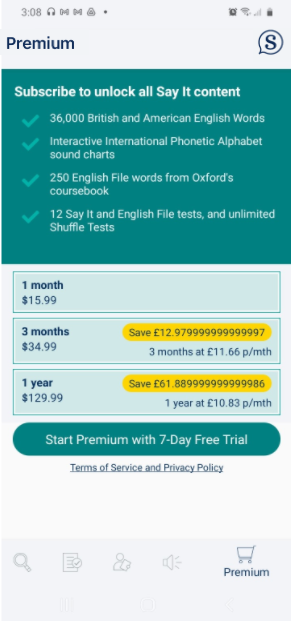

One important note is the differing pay schemes for the Android and iOS versions of this app (see Figures 5a and 5b). While Android charges $15.99/month for use of the app, the iOS version charges a one-time fee of $6.99 for the American English words and has other in-app purchases for other features, such as access to a sound chart and additional tests.

Finally, the app can be used with a Smartboard and speakers in a classroom so that the whole class can see and hear the display, although it is not yet compatible with Bluetooth technology. Some advice for learners and teachers on how to use the app is provided in the Frequently Asked Questions section of the app and in Teaching Tips (Oxford University Press, 2013).

Evaluation

To evaluate the strengths and weaknesses of this mobile app, Reinders and Pegrum’s (2016) evaluative framework for mobile language learning resources was used. This framework is broken down into the following categories: educational affordances (different accounts of learning, i.e., local and global, episodic and extended, and personal and social), general pedagogical design (i.e., traditional or modern approaches to learning), L2 pedagogical design (i.e., communicative approach, intercultural learning), SLA design (i.e., comprehensible input and output, negotiation for meaning, and feedback), and affective design (the user’s engagement and affective filters).

In terms of educational affordances, the support for individualized, autonomous learning that Say It provides is among its greatest strengths. The app facilitates episodic learning in manageable chunks (Pegrum, 2014), which could be as small as practicing a single syllable. Similarly, the My Words list encourages learners to revisit items that proved challenging, facilitating extended learning. By allowing users to search for the words that best fit their needs, rather than imposing a one-size-fits-all series of lessons, Say It facilitates personal learning, allowing users to manage their own learning experience (van Harmelen, 2006). Furthermore, mobile device apps allow for episodic learning to occur anytime, anyplace.

Regarding general pedagogical design, the app follows the audiolingual method, which may be productive with beginners, facilitating noticing and improving the quality of subsequent production when students may otherwise have few resources in the target language (Yu, 2009). This method, however, may prove limiting to advanced learners, who may need more situated and student-centered activities. The only opportunity for collaboration may lie in the app’s file sharing capabilities. In addition, the app designers provide some suggestions on how to incorporate Say It into a larger pedagogical plan to support reading, listening, and speaking activities. For example, Teaching Tips suggests that students prepare a five-minute pitch to convince others to invest in their product, identifying and practicing ten words using Say It before their presentation (Oxford University Press, 2013), which supports autonomous learning.

Considering L2 pedagogical design, there is little immediate connection between the app and communicative or intercultural learning, as Say It does not contextualize learning, directly facilitate interactions, or provide any cultural learning relevant to the words being practiced. While the Teaching Tips do show how the app could be incorporated into more communicative activities, there is nothing inherent in the app that allows this type of learning to take place. In terms of task-based learning, the app does little to connect learning to real-life goals. Addressing word meaning could be a feasible first step in that direction, considering the app’s connection to Oxford dictionaries.

Regarding SLA design, Say It’s greatest strength may be in terms of comprehensible input, in that the user can hear the input multiple times and at the speed which is most beneficial. Say It also takes advantage of input devices and recording abilities (Dennen & Hao, 2014), which promotes noticing of language features (Kukulska-Hulme & Bull, 2009). In terms of weaknesses, perhaps the greatest shortcoming lies in the lack of feedback regarding whether the learner’s production matches the model. Learners would benefit from more guidance in using the visual display to determine whether their productions are satisfactory (Ramírez Verdugo, 2006). Similarly, the app does not automatically align the beginning of the user’s speech with the beginning of the model’s production. This lack of alignment makes direct comparisons between the two recordings challenging, even if the user attempts to correct for this by speaking the moment they begin to record.

Finally, looking at affective design principles, use of the app would likely lower affective filters and increase engagement due to its sleek and colorful design, as well as the ability of its users to practice wherever they learn best. Conversely, in interactions with a live instructor in class, students may wish to avoid embarrassment, which could reduce their willingness to speak (Edge et al., 2011). However, if the app were used in class as recommended, teachers may find it challenging to help multiple students navigate the differences between the iOS and Android versions, particularly the divergent pay schemes, especially if the higher initial cost for Android users causes frustration (see Figures 5a and 5b). While the app is generally easy to use, students may additionally find the lack of alignment between the beginning of the learner’s production and that of the native speaker to be troublesome.

Conclusion

Overall, Say It supports autonomous learning and helps learners improve their listening, pronunciation, and speech through the comprehensible audio and visual input it provides. Although Say It would benefit from additional user training materials, the current app seems appropriate for both individual and class-based instruction. For Say It to be useful to students on a continuing basis, however, in-app purchases must be made to allow for access to a sufficiently wide range of words. For some users, especially those with Android devices, the price tag may represent a significant barrier, and the difference in payment schemes may prove unfair to some learners in classroom settings. Nevertheless, Say It represents a valuable addition to the pronunciation community, putting some of the affordances of visual feedback displays in a format accessible to language learners.

References

Browne, C., Culligan, B., & Phillips, J. (2013). The New General Service List. Retrieved from http://www.newgeneralservicelist.org

Dennen, V., & Hao, S. (2014). Paradigms of use, learning theory, and app design. In C. Miller & A. Doering (Eds.), The new landscape of mobile learning: Redesigning education in an app-based world (pp. 21-41). Routledge.

Edge, D., Searle, E., Chiu, K., Zhao, J., & Landay, J. A. (2011). MicroMandarin: Mobile language learning in context. In D. Tan, G. Fitzpatrick, C. Gutwin, B. Begole, & W. A. Kellogg (Eds.), Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 3169-3178). ACM Press.

Hardison, D. M. (2004). Generalization of computer assisted prosody training: Quantitative and qualitative findings. Language Learning & Technology, 8(1), 34-52.

Kukulska-Hulme, A., & Bull, S. (2009). Theory-based support for mobile language learning: Noticing and recording. International Journal of Interactive Mobile Technologies, 3(2), 12-18.

Latham-Koenig, C., Oxenden, C., & Seligson, P. (2013). English File (2nd ed.). Oxford University Press.

McCrocklin, S. M. (2016). Pronunciation learner autonomy: The potential of automatic speech recognition. System, 57, 25-42.

Munro, M. (2018). How well can we predict second language learners’ pronunciation difficulties? CATESOL Journal, 30(1), 267-281.

Oxford University Press. (2013). Say It teaching tips [Leaflet]. http://elt-cap-webspace.s3.amazonaws.com/say_it/say_it_teaching_tips.pdf

Pegrum, M. (2014). Mobile learning: Languages, literacies and cultures. Palgrave Macmillan.

Ramírez Verdugo, D. (2006). A study of intonation awareness and learning in non-native speakers of English. Language Awareness, 15(3), 141-159.

Reinders, H., & Pegrum, M. (2016). Supporting language learning on the move: An evaluative framework for mobile language learning resources. In B. Tomlinson (Ed.), SLA research and materials development for language learning (pp. 221-233). Taylor & Francis. https://doi.org/10.4324/9781315749082

Van Harmelen, M. (2006). Personal learning environments. In Kinshuk, R. Koper, P. Kommers, P. Kirschner, D. G. Sampson, & W. Didderen (Eds.), Proceedings of the Sixth International Conference on Advanced Learning Technologies (pp. 815-816). IEEE Computer Society.

Yu, X. (2009). A formal criterion for identifying lexical phrases: Implication from a classroom experiment. System, 37(4), 689-699.

About the Reviewers

Jeanne Beck is a third-year Applied Linguistics and Technology Ph.D. student at Iowa State University. She has taught in both rural and urban K-12 and higher education contexts in the United States, Japan, and South Korea. Her interests include second language assessment, CALL, technology training for teachers, and EL policy. <beckje@iastate.edu>

Andrea Flinn is a third-year Applied Linguistics and Technology Ph.D. student at Iowa State University. She gained two years of experience incorporating mobile-assisted language learning (MALL) into pronunciation instruction at San Francisco State University, where she earned her MA-TESL. Suprasegmentals and word stress both play key roles in her instruction. <flinn@iastate.edu>

| © Copyright rests with authors. Please cite TESL-EJ appropriately. Editor’s Note: The HTML version contains no page numbers. Please use the PDF version of this article for citations. |