February 2023 – Volume 26, Number 4

https://doi.org/10.55593/ej.26104a7

Surya Subrahmanyam Vellanki

University of Technology and Applied Sciences, Oman

<surya.vellanki@nct.edu.om>

Saadat Mond

University of Technology and Applied Sciences, Oman

<saadat.mond@nct.edu.om>

Zahid Kamran Khan

University of Technology and Applied Sciences, Oman

<zahid.khan@nct.edu.om>

Abstract

The hasty adoption of remote teaching (RT) by educational institutes in response to the COVID-19 pandemic has drastically impacted teaching, learning, and assessment. With most institutes unprepared for sudden lockdowns, and many educators lacking online teaching and assessment experience, old methods of assessment continued to be used, at least initially. When concerns about academic integrity soon followed, new pedagogical concepts and modes were trialed in the hopes of addressing perceived inadequacies. This study, using a mixed-method design, investigates teacher-participants’ perceptions of online assessment and academic integrity. It explores the challenges they believed had a detrimental effect on the latter – technical difficulties, problems due to lack of physical presence, student behavioral issues, and concerns about assessment design and process. This study also discusses teacher-participants’ suggested approaches to safeguarding against dishonesty, including modifications in design, conduct, and evaluation of quizzes and exams. Finally, it ends with teacher-participants’ recommendations and suggestions for policy-level changes to help minimize the adverse effects of prolonged RT.

Keywords: Academic integrity, formative assessment, internet/web-based/online/remote assessment, ipsative assessment, performance-based assessment, summative assessment

Although it has been over two years since the start of the pandemic, students, teachers, administrators, and governments in many countries appear to have had little respite from its consequences. Some schools, colleges, and universities closed for more than a year as an immediate reaction. A few opened with limited student attendance, and others chose to continue remote teaching for longer. As COVID-19 is unlikely to simply disappear in the foreseeable future, it seems likely that repercussions will continue to be felt for some time.

The onset of COVID-19 brought sudden and unexpected changes to teaching and learning. Almost everything went online on a previously unimaginable scale, largely untested, and mostly according to trial and error (Burgess & Sievertsen, 2020). It proved revolutionary for both educators and students all over the world as both were compelled to move from traditional to remote teaching without the required preparedness (Abduh, 2021).

In the initial stages of the implementation of RT, confusion reigned; there were few or no clear policies and guidelines in many, if not most, higher education institutions (HEIs). Programs were modified and adapted in a rush, and questions about what to teach, how to teach, what new duties teachers should have, what an appropriate workload is, what the new teaching environment looks like, how online assessment should be handled, etc., were not clear to most stakeholders. There were other problems too, including poor or absent infrastructure, teachers’ and students’ lack of experience in online education, changes in work schedules, and the inconvenience of working and studying from home.

Despite all the problems mentioned above, one positive aspect noted by teachers was how well they were able to work with administrators to bring new technology to help engage students and augment the learning process. As remote teaching and learning plans were implemented, teachers curated a new array of online resources to facilitate learning and connected with students via new online platforms such as Zoom, MS Teams, and Google Meet. Tried and trusted learning management systems (LMSs) like Moodle, Google Classroom, Blackboard, etc., were generally retained. There were many issues during remote teaching, however, with assessment being a particularly challenging area because it largely came down to trial and error. Before we discuss different remote assessment practices, let us first understand what assessment is and what assessment procedures many teachers were following prior to the emergence of COVID-19.

Understanding Assessment

Assessment, according to the Longman Dictionary of Language Teaching and Applied Linguistics (Richards & Schmidt, 2010, pp. 25-36), is “a systematic approach to collecting information and making inferences about the ability of a student or the quality or success of a teaching course on the basis of various sources of evidence.” Assessment is an essential feedback mechanism to understand what a student has learned, and educators may use a wide range of assessments in their classes. Summative assessment, for example, is used at the end of a course to judge to what degree students have achieved expected learning outcomes and acquired an understanding of some specific teaching content. Usually, high-stakes examinations are part of summative assessments, the results of which may see students promoted to more advanced levels of study or permitted to graduate altogether. Formative assessment, on the other hand, helps monitor students’ understanding and progress via formal quizzes and assignments, and/or informal procedures such as in-class activities, presentations, peer evaluation, etc. Teachers provide students with various types of feedback including written, audio, or video feedback during the formative assessment stage (Johnson & Cooke, 2016). Students may also be encouraged to self-assess to see how their learning is progressing. Formative assessment is used to manage instruction and learning and is employed to adjust teaching practices by modifying learning activities to improve student achievement (Looney, 2005; Baleni, 2015). In addition, its function, as described by Black and Wiliam (2009), is to enhance students’ progress as teachers elicit and interpret the evidence about student achievement to modify their instruction for the better. In most cases, it contributes to the final grades of students in their courses.

Assessment Before COVID-19

Prior to COVID-19 disrupting educational processes, HEIs usually followed a variety of assessment methods based on their academic policies. They carried out formative assessment in the classroom to monitor students’ progress through formal procedures such as conducting quizzes and periodic written assignments, LMS assignments, e-portfolios, in-class presentations, one-to-one speaking, and pair or group tasks. Informal procedures included student participation in classes, peer evaluation, etc. Students were generally provided with various types of feedback during formative assessment. Terminal examinations and individual or group projects and presentations were typically part of summative assessment. Where HEI policy now stipulates the use of environment-friendly methods, e-portfolios have replaced hard copy portfolios and LMS assignments have replaced paper assignments. Most of the traditional assessment methods involving hard copies were arguably more secure and reliable as they were strictly invigilated by teachers and, therefore, academic integrity could be maintained to a large extent. Platforms like Turnitin were used by some institutions to detect student plagiarism; nevertheless, one could not rule out the possibility of some kind of plagiarism in those forms of assessment that were not directly observed or invigilated. For example, there were occasions where teachers might have felt that a few students plagiarized homework assignments; it was empirically observed that some students occasionally tended to flout the concept of academic integrity.

Remote Assessment Methods and Issues During COVID-19

Online assessment, as defined by JISC (2007, p. 6), is “the end-to-end electronic assessment process where ICT is used for the presentation of assessment activity, and the recording of responses.”

As HEIs shifted to remote/online teaching, educators faced unprecedented challenges in assessment. For example, many institutions used existing student cumulative assessment records and canceled formative assessments, or forced teachers to finish their syllabus through adapted online teaching as soon as possible and conduct examinations in a short period of time with “an inferior alternative” (Burgess & Sievertsen, 2020) testing method – online assessment. The sudden change posed a real problem in upholding academic integrity. The seriousness of the issue can be understood from the fact that organizations such as Cambridge (CIE, AICE, etc.), SAT, British Council, etc. temporarily suspended holding examinations. Unfortunately, in higher education this was deemed an unviable solution, so most HEIs did not do the same.

Many institutions revisited their strategies and tried different platforms for both formative and summative assessment in order to understand the strengths and weaknesses of each and choose the most appropriate based on their needs and resources. Most online learning and assessment platforms such as Moodle, Blackboard, etc. allow teachers to organize their courses, interact with students, integrate different web-based tools (such as H5P, Bookwidgets, etc.), use communication tools (e.g., a forum), and conduct quizzes. Through such asynchronous/synchronous learning platforms, teachers give assignments and quizzes to students which are used to grade, track participation, monitor progress, etc. Students can work at their own pace to read uploaded materials, do assigned quizzes, and communicate with teachers for further clarification. Despite following various remote teaching/learning/assessment practices, many educational institutions have been constantly modifying their policies and guidelines related to teaching, learning, and assessment even after two years because of various issues.

The main difference between the assessment of students in traditional classrooms and remote teaching is their physical presence, which remains the biggest challenge to be dealt with by educators in safeguarding the standards of education. Lack of students’ physical presence and their interaction with teachers leaves fewer options for teachers to assess their students (Abduh, 2021). Before educators resorted to remote teaching, formative assessment, to a large extent, depended on students’ physical presence in classrooms, where students’ daily progress was observed through their responses in discussions, their body language, their way of answering teachers’ questions, etc. This greatly helped teachers in formative assessment and generally provided a reliable means of ensuring academic integrity. Due to the nature of remote assessment, concerns about academic integrity are on the rise owing to student malpractice.

A common abuse observed during online examinations was plagiarism, that is, the use of the ideas, content, or structures of others without appropriately acknowledging their source (Fishman, 2009), a definition further expanded by Foltýnek, Meuschke, and Gipp (2020) to include self-plagiarism, unintentional plagiarism, and plagiarism with the original author’s consent. Students were found copying completely or partially from other students’ previously submitted assignments, partially rewording text by changing grammar structures or vocabulary, and using online paraphrasing services. Another method of cheating involved translating an original work into another language with the goal of hiding the source (Sakamoto & Tsuda, 2019; Roostaee, Sadreddini, & Fakhrahmad, 2020). It is very difficult even for anti-plagiarism software to detect such plagiarized content in the final product.

Essays, reports, and presentations are used by many teachers all over the world as a fairly reliable means of ascertaining whether students have achieved the outcomes of a particular course. However, many teachers express concerns with remote assessment, particularly when student works are submitted suspiciously free of errors and, in many cases, contain a great deal more content than they would if written under face-to-face classroom conditions. One reason is that “many students simply do not grasp that using words they did not write is a serious misdeed” (Gabriel, 2010). Unrestrained and indiscriminate use of online information has clearly blurred plagiarism boundaries for students (Gabriel, 2010).

Another issue highlighted by many researchers is ‘contract cheating’, in which a student uses the help of a third party (sourced through social media platforms such as Instagram, Facebook, etc.) to complete their coursework, which is then submitted to teachers for grading as the student’s own work. These third-party writers are known as ‘essay mills’ (Gamage, Silva, & Gunawardhana, 2020, p. 5). They offer a range of services that may involve payment or other favors, called commissioning (QAA, 2017). Hodgkinson, Curtis, MacAlister, and Farrell (2015) mention that such acts of cheating may not always be a result of students’ laziness, but an ingenious effort to fool the system of examinations or the educational institution/organization, since students have found loopholes in the system and are exploiting them to their advantage.

Abduh (2021) found in her research that some students consider online examinations a chance to improve their grades through cheating. It is certainly not an exaggeration to say that the results of students during the COVID-19 period were much better than those before the pandemic. The main reason for this was the lack of proper monitoring mechanisms in both test preparation and in procedures followed during remote assessment as face-to-face invigilation was obviously not a viable option.

Another challenge encountered was internet connectivity issues. Abduh (2021) mentioned that not only do teachers find rectifying problems exhausting, but spasmodic disruption also hinders the smooth flow of assessment. A study by Yulianto and Mujtahin (2021) revealed that teachers had negative experiences with online assessments during the COVID-19 pandemic, especially because of weak internet connections, lack of enthusiasm among students, and doubtful validity of the assessments themselves.

Regardless of these challenges, one of the main goals of teachers is to find out how much students have learnt or how much learning is taking place in online classes. Mindful teachers would like to know if students have learnt as much as they would have in a traditional classroom situation, and a proper assessment of students’ learning offers the most accurate picture. Many think about what objectives they can set or what adjustments they should make to teach or assess students in remote teaching situations, and what worries some is the disparity they see between classroom performance and test performance. Moreover, there is no guarantee that either teaching or examinations will return to traditional classrooms, at least not in the near future. Hence, it is imperative to find ways to ensure that online assessment is as reliable, authentic, and valid as possible. Beckman, Lam, and Khare (2017) believe that proper implementation of online learning assessments can make assessments more reliable and assist students in achieving their desired outcomes.

Despite all the planning, preparation, and execution by different stakeholders, one cannot deny that academic standards are being compromised in different areas and for a variety of reasons, which we will look at in the following parts of this paper. Though this compromise has taken place in three different areas (teaching, learning, and assessment), this paper focuses on remote assessment.

This study explores the experiences of teachers who, in the face of COVID-19, tried to address the challenge of abrupt changes to their teaching and learning systems. This study is unique in that it explores genuine reflections of EFL teachers with first-hand experience of remote assessment, while simultaneously attempting to consolidate previous research about challenges in remote assessment of students’ learning, with special emphasis on the striking variance in students’ results across different types of tests. Teachers’ sensitivities about remote assessment are significant not just during periods of remote teaching. They will play a substantial role in the future for three reasons: a) there is no certainty as to when the academic situation will return to ‘normal’; b) most educational institutions are likely to follow a hybrid learning and remote assessment model for an indefinite period; and c) the accessibility and convenience afforded by technology might see students deliberately choose online courses instead of face-to-face ones.

This paper addresses the following research questions and provides some practical solutions to address the issues posed by remote assessment.

- What are the challenges encountered by teachers during remote assessment?

- What strategies could be adopted to deal with the challenges in remote assessment?

- How could existing assessment methods and practices be modified/used to suit a remote teaching context?

- How could alternative assessment methods and practices be used to streamline assessment in RT?

Methodology

Research Design

This study adopted a mixed-method approach by using both qualitative and quantitative data to answer the above questions. This approach presents opportunities to triangulate both forms of data collected from different sources and minimizes the limitations of both approaches. Thus, it facilitates validation of data through cross-verification from both quantitative and qualitative data and increases the validity of the research. Such an approach also highlights various perspectives as one form of data complements the other (Creswell & Plano Clark, 2011; Creswell, 2012; Creswell & Creswell, 2018).

Setting and Participants

The participants were 37 EFL teachers (20 females and 17 males) in the General Foundation Program of a university in Oman. Twenty-nine hold master’s degrees and eight hold doctoral degrees. Barring one participant, all have at least 10 years of EFL/ESL teaching experience, and some have more than 20. More than half have gained their experience exclusively in Oman. All participants said that they experienced various challenges in teaching and assessing their learners during periods of remote teaching. The university uses MS Teams for instruction during remote teaching, and Moodle is the LMS.

Ethical Considerations

This study is part of a project approved by The Research Council, Oman, and all due ethical considerations for research with participants were observed, including obtaining the consent of all teacher participants during data collection and assuring them of the anonymity and confidentiality of the data.

Data Collection and Analysis

Data was collected through a questionnaire prepared by the researchers. The questionnaire contained several themes relevant to emergency remote assessment and sought both quantitative and qualitative data from teacher-participants. The quantitative part comprised multiple choice questions (MCQs). The rationale behind choosing MCQs over a Likert scale was that each question category was designed to accommodate multiple options, and the respondents had the choice of selecting as many options as applicable based on their experience and understanding of remote assessment. Another consideration was to make the questionnaire thorough and manageable. As the questionnaire contained a number of categories and sub-categories related to remote assessment challenges, the chosen format enabled the researchers to accommodate responses to a spectrum of challenges and keep the length of the questionnaire manageable. The qualitative data was collected through open-ended questions which were a part of the questionnaire. In addition, notes taken during informal conversations with the participants, as proposed by Swain and Spire (2020), also served as a source of qualitative data for this study. The face validity of the questionnaire was assessed by four language experts with over 10 years of experience in teaching English. They approved the questionnaire and its layout. A pilot study with 10 EFL lecturers was conducted to establish the content validity of the questionnaire.

The study used a purposive sampling technique to select individuals who were experienced in and knowledgeable about the field of enquiry (Creswell & Plano Clark, 2011). This technique helped researchers find willing individuals who could reflect on their remote assessment experiences and provide valuable inputs. All respondents also have experience in assessing EFL students remotely. The questionnaire was created using Google Forms and was administered online.

Quantitative data obtained from the responses on Google Forms were downloaded and analyzed using MS Excel. The percentage related to each question subcategory was calculated from the data to determine the degree of each challenge (from most significant to least significant). Qualitative data, on the other hand, were analyzed using the thematic analysis approach proposed by Braun and Clarke (2006). The analysis started with researchers familiarizing themselves with the data. The data was examined and coded under different themes using keywords and phrases which indicated potential patterns. Apart from certain pre-determined themes, new themes also emerged from the data (Braun & Clarke, 2006; Creswell & Creswell, 2018). The researchers continued to engage with the data to distinguish between themes and sub-themes and coded them accordingly. Any differences or ambiguities of interpretation were resolved through detailed discussion. Finally, the highlighted data was reviewed to write observations.
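The percentage calculation described above can be illustrated with a minimal sketch. It assumes that each respondent's answer to a multi-select question is stored as a single text cell with options separated by a semicolon; the separator, the function name `option_percentages`, and the sample answers are hypothetical and are not taken from the study's data or from the researchers' actual Excel workflow.

```python
from collections import Counter


def option_percentages(responses, separator=";"):
    """Return {option: percent of respondents selecting it}, highest first."""
    total = len(responses)
    if total == 0:
        return {}
    counts = Counter()
    for cell in responses:
        # A respondent may tick several options; count each once per respondent.
        selected = {opt.strip() for opt in cell.split(separator) if opt.strip()}
        counts.update(selected)
    # Rank from most to least frequently selected (ties broken alphabetically).
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return {opt: round(100 * n / total, 1) for opt, n in ranked}


# Hypothetical answers from four respondents (illustration only, not study data).
sample = [
    "connectivity; camera off",
    "connectivity",
    "camera off; late arrival",
    "connectivity; late arrival",
]
percentages = option_percentages(sample)
for option, pct in percentages.items():
    print(f"{option}: {pct}%")
```

Because respondents could select more than one option, the percentages for a question are computed against the number of respondents and need not sum to 100.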

Discussion and Analysis

Challenges Encountered by Teachers During Remote Assessment

The responses to the questionnaire revealed the issues teachers encountered during remote assessment. These can be categorized broadly as problems related to students’ lack of physical presence, technology, behavior, and the process of testing and assessment.

Lack of Physical Presence

A vast majority of teachers (96%) believed that not only was lack of physical presence the predominant challenge during RT, but it was also the root cause of other problems they faced. Participants found it particularly difficult to evaluate the effectiveness of their teaching, mainly because they could not actually see their students as easily as they could in a traditional classroom setting. Teachers reported that students “actively avoided classwork” (T2, T5, T7, T8, T15), were “reluctant to participate in online activities” (T2, T8, T13, T20), and “responded only when repeatedly prompted” (T9, T25). This affected teachers’ ability to give and receive appropriate feedback, causing them to feel they could not effectively check a student’s work, let alone evaluate understanding or progress. When students who avoided putting in effort in class subsequently scored very highly in assessment tasks, teachers began to harbor doubts about the validity of testing mechanisms.

Technical Issues

Eighty-five percent of teachers reported that internet connectivity, issues related to software or hardware, and sluggish networks sometimes adversely impacted their ability to conduct remote assessments. Teachers who had to seek technical advice to sort out problems said that it was “not conducive to the smooth conduct of assessment activities” (T5, T9, T26). They also believed that breakdowns in communication caused by “internet issues during exams sometimes resulted in poor outcomes for certain students” (T1, T3, T10).

However, teachers also suspected that some students exaggerated technical issues in the hope of gaining some personal advantage. They believed that during classes and exams, for example, certain students (usually those with irregular attendance or who avoided coursework) claimed to be suffering from connectivity issues that most likely did not exist. Other problems such as using multiple or incompatible devices and sudden electricity failure were also reported by teachers as possibly dubious excuses.

Behavioral Issues

Poor attitudes to learning on the part of students came up as a significant behavioral issue during remote assessment. Teachers observed that many students used phones during examinations, and often received text messages at highly inappropriate times. However, due to the limitations of videoconferencing technology they “could not easily identify the students involved or determine the contents of the information exchange” (T3, T18, T23, T29). Another behavioral issue noticed by the teachers was that during exams, some students constantly engaged them through unnecessary questioning – most likely an attempt to impede effective invigilation.

Ninety percent of respondents reported noticing students cheating through presenting obviously plagiarized work or by deliberate noncompliance with instructions during remote assessment. For example, students were asked to attend exams on time, to keep their cameras on and focused on their faces, and to keep microphones on/off depending on the requirement. However, many students flouted these rules by coming late to exams, keeping their cameras either off or unfocussed, and turning microphones on/off. This made “effective monitoring of assessment processes difficult and unreliable” (T4, T7, T32). While some students may have inadvertently broken the rules, many appeared to have done so on purpose in an attempt to gain an unfair advantage.

Issues Related to Testing and Assessment Processes

Teachers said that LMSs often made remote assessment more challenging, mainly because of how different educational institutions approach formative and summative assessment. During remote assessment, students were permitted to take tests in the comfort of their homes where they had access to classroom materials that included online and offline resources. Keeping in mind the login issues, internet connection problems, etc., student-friendly measures (such as giving students more time compared to that given in a face-to-face test/exam) were extended so that they did not feel unduly pressured during remote assessment.

One significant issue raised by a majority of respondents relates to the design and layout of tests, and another concerns the nature and type of questions within the tests themselves. They generally felt that some test questions seemed to have been constructed or framed in a way that had more to do with overcoming the technical limitations of particular LMSs than with ascertaining student knowledge or ability. As a result, “questions were sometimes diluted to the extent that they no longer posed any significant challenge” (T21, T28) to students, which reduced the rigor of the tests. For example, a listening exam that relied on the use of a drop-down menu was designed in a way that permitted students to answer correctly without having to actually listen to the questions or perform any kind of critical thinking.

Respondents said that “the amount of time allotted to students for the online examinations”, as mentioned above, was another factor that compromised the quality of assessment because it “was incommensurate with the kind of questions asked” (T5, T11). Students were allowed some extra time to finish their exams to avoid receiving complaints about technical glitches or internet issues, but this extra time probably made it easier for students to engage in malpractice.

Regarding invigilation during online exams, around 65% of teachers said that it was often difficult to identify cheating as it happened due to limitations of technology. A major challenge, for example, was posed by the need for teachers “to monitor large numbers of student cameras using only a single screen” (T9, T25, T34). More attention was paid to making sure that students kept cameras on than to what students were actually doing. Moreover, since students were instructed to keep the cameras directed at their faces, invigilators could not see anything else. Hence, it was nearly impossible to check if the students were cheating.

Another crucial issue was that teachers also could not directly monitor what students were doing on LMSs such as Moodle. It is not possible, for example, for them to actually witness students answering test questions or writing. It is also impossible for students to share their screens only with invigilators, as video conferencing platforms such as MS Teams do not have that single sharing option.

Even where exams can be secured through certain features of LMSs (for example, using a ‘safe exam browser’), the failure of administration teams to use such features undermines efforts to eliminate cheating. This disinclination could be attributed to the fear that such measures would prompt an influx of complaints and excuses from students, which might prove impossible to handle in an environment already fraught with claims of technical issues, almost none of which can be easily verified or addressed.

Another issue that teachers faced consistently during online examinations was the common practice of students submitting obviously plagiarized materials. This was highlighted by often huge inconsistencies between student performance during class and performance during testing. For example, low-level users of English regularly turned in essays that were almost entirely free of errors and apparently unique, in that they were not flagged by automated plagiarism checking. The number of words that students wrote in online examinations was also a matter of concern because, in most cases, “students wrote almost three times as much they would usually write in their face-to-face or online classes” (T9, T15, T16). Respondents reported that they frequently came across error-free answers in online examinations. However, they also said it was difficult to establish cheating since they could find no concrete evidence.

More than 80% of teacher participants reported that they thought many students were using assignment-writing providers on social media platforms such as Instagram or WhatsApp. It is not difficult to find such groups because they advertise widely, and it is believed their services are popular with students. This is in accordance with the findings of the Quality Assurance Agency (2017) and Gamage, Silva, and Gunawardhana (2020). Such unethical practices not only compromise the academic integrity of exams but also damage the reputation of educational institutions and create incompetent students who are ill-equipped to handle the challenges of further higher education or the demands of future careers.

Around a quarter of teacher participants said that plagiarism would have a negative effect on the results of research that many academicians conduct. It is a matter of concern as to how the results of online pre- or post-testing used for a piece of research could be deemed reliable when there is a great deal of suspected plagiarism behind them.

A further challenge mentioned by the teachers concerns detecting and confirming student malpractice. Anti-plagiarism tools such as Turnitin have proved to be a failure in the present RT context. Turnitin, which stores previously submitted papers and essays in a database, checks if a particular piece of work has traces of a previously submitted work. If so, it flags it as duplicate content and provides a highlighted summary of similarities. However, it “cannot determine when content has been translated from one language to another” (T7, T14, T24, T25, T36). For example, where many teachers firmly believed that students’ essays contained evidence of having been translated, Turnitin failed to flag the contents as plagiarized. Many students recognize this shortcoming and use it to their advantage.

In informal conversations, participants revealed that they experienced students attempting to exploit teleconferencing platforms by inducing ‘technical issues’ during remote one-on-one oral examinations. Individuals apparently sought to control the placement of cameras, so they were off-screen, not clearly or not wholly visible – an attempt to obfuscate the identity of the student actually being assessed, or to conceal the access of computers, mobile devices, or other prohibited aids.

Finally, acceptance and grading of work that is suspected of being plagiarized increases the unreliability of test results. Some teachers will strictly follow marking criteria and grade accordingly, irrespective of the fact that there is a good chance the work being graded is plagiarized. On the other hand, “some markers look at work with a very critical eye and award marks accordingly” (T1, T13). Disparities may be occurring in part due to the lack of a uniform marking policy regarding plagiarized content.

Strategies to Deal with Challenges in Remote Assessment

Respondents suggested a variety of plans or strategies to help maintain academic integrity in remote assessment. The vast majority wanted modifications to current formative and summative assessment practices and proposed that certain technical, personnel, and policy issues be addressed to cope with the challenges. The paper will address them in the discussion on formative and summative assessment.

Formative assessment – classroom practices

Though summative and formative assessment are both extremely important, teachers see formative assessment in a remote teaching context as somewhat more so. This is mainly because the entirety of student learning takes place outside the classroom, and teachers put a premium on being able to regularly and accurately gauge how much course content is actually being absorbed by students. Therefore, respondents believed that ‘best practices’ in face-to-face assessment should be applied to online environments. During remote teaching, many institutions started administering formative assessments both synchronously and asynchronously. For example, in synchronous sessions, teachers could use formal procedures such as conducting quizzes or assignments, or informal procedures such as observing student participation and engagement in various activities. Regardless of the activities and tasks given to students for assessment, teachers should make sure that formative assessments are valid, well-timed, constructive, and specific to the needs of students.

Respondents also said that there should be “more emphasis on performance-based assessment and less on product-oriented assessment” (T4, T9, T27). Performance-based language assessment includes various types of activities such as oral and written assignments, open-ended responses, individual and group presentations, research projects, and other interactive tasks (Brown & Abeywickrama, 2019; Şenel & Şenel, 2021). It focuses on observing and judging the development of students’ learning as it occurs rather than on evaluating performance based on the delivery of some final product.

Some respondents suggested that academic integrity could be safeguarded through the use of alternative methods of assessment, opining that university and college authorities should encourage teachers to modify assessment practices according to contextual needs. This view is supported by Xu & Mahenthiran (2016), who believe that alternative forms of assessment help cultivate higher-order thinking and achieve pedagogical objectives. Teachers proposed, for example, “the introduction of open-book quizzes and exams” (T25, T31). They also suggested designing assignments that include open-ended problems requiring higher-order thinking skills (HOTS), such as analyzing, synthesizing, and creating, and avoiding direct questions that have only a single correct answer. Tasks and activities could be incorporated into classroom discussions, student reports (synchronous or asynchronous), poster presentations, and video presentations. To make assessment more interactive and less static, students could be assessed by spontaneous questioning during their presentations. They could even be prompted to provide feedback on other students’ work; students who participate fully and enthusiastically in these processes should be rewarded appropriately.

Many respondents believed that performance-based assessments, though time-consuming, work better in remote teaching contexts and are more valuable for judging student achievement. For example, they suggested that “students would gain a better understanding of subject matter through group learning activities (i.e., small-scale projects, creative writing challenges)” (T7, T12, T17) as they encourage students to interact more with peers and teachers. Project-based learning could be used in asynchronous activities in which students could be assessed according to how well they prepare, record, and share a presentation with their teachers. Alternatively, students could be asked to present in synchronous sessions. Regardless, in projects or assignments involving multiple steps, students could be asked to present or report on projects at every stage.

Oral questioning is a part of formative assessment procedures in many educational institutions and is usually practiced in a face-to-face context. In the context of remote teaching, to make up for the lack of direct observation of students, a majority of respondents mentioned that teachers should check individual students’ understanding of certain concepts through oral questioning. This could be done by asking direct questions about different conceptual aspects of assigned reports or projects. It is also important to ask questions that allow students to answer in ways that demonstrate an ability to think critically and use logical reasoning, as well as permit teachers to appreciate that students are the actual authors of the work they are presenting.

Alternative assessment practices suggested by participants included peer and self-assessment. As defined by Welch (2020), self-assessment is an opportunity for students to evaluate their own performance in a course. Peer assessment is a method of grading where students evaluate peers’ assignments based on guidelines provided by the teacher. Teachers would provide students with rubrics that are clear, unambiguous, and easily applied, and ask students to use them to assess either themselves or their peers. A thorough evaluation would not only help students see if they or others have met the stated criteria and outcomes but also provide a sense of ‘realness’ about their understanding, progress, and the outcomes they are striving for. This perspective of respondents aligns with the opinion of Welch (2020), who believes that such rubrics provide opportunities for students to understand what they should focus on and help them become autonomous learners as they get a chance to reflect, set objectives, and take control of their language learning experience.

Some respondents favored using ipsative assessment, defined as “a mode of assessment in which the assessed individual is compared to him-or herself either in the same field through time or in comparison with other fields” (Isaacs, Zara, Herbert, Coombs, & Smith, 2013). In practice, teachers are expected to track the progress of individual students by comparing current performance, using scores, against previous performance. Zhou & Zhang (2017) also support the idea of encouraging teachers to give more feedback through ipsative assessment to help students improve their performance. It is imperative that teachers closely watch to ensure that student progress occurs gradually – unexpected dramatic improvement being a sign of possible malpractice.
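The comparison of current scores against previous performance described above can be illustrated with a minimal sketch. It assumes that each student's scores are available as a chronological list on a common scale; the function name `flag_unusual_gains` and the 15-point threshold are hypothetical choices made for illustration and do not come from the respondents or the cited literature. A flagged score is only a prompt for follow-up (for example, oral questioning), not evidence of malpractice.

```python
from statistics import mean


def flag_unusual_gains(scores, max_gain=15.0):
    """Return the positions of scores that exceed the student's own
    earlier average by more than max_gain points.

    scores   -- chronological list of one student's scores (assumed 0-100)
    max_gain -- illustrative threshold; an assumption, not a recommendation
    """
    flagged = []
    for i in range(1, len(scores)):
        baseline = mean(scores[:i])  # average of all earlier attempts
        if scores[i] - baseline > max_gain:
            flagged.append(i)
    return flagged


# One student's quiz history: the fourth score jumps far above the baseline.
history = [52, 55, 58, 91, 60]
print(flag_unusual_gains(history))  # [3]
```

Comparing each score with the student's own earlier average, rather than with the class average, keeps the check ipsative: a consistently strong student is not flagged, whereas a sudden departure from an individual's own trajectory is.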

Seventy-five percent of respondents suggested that students maintain e-portfolios as a method of formative assessment that might help assess progress and performance. They recommended that e-portfolios include writing drafts, written feedback from teachers, checklists, graphic organizers, model essays, quizzes, etc.

Respondents strongly emphasized the need for more authentic assessment tasks and tools based on learning needs and performance so they could better evaluate students’ command over the subject matter.

Formative and Summative Assessment – Quizzes/Examinations

Design of examinations/quizzes/assignments

With regard to improving online examination and formative and summative assessment procedures, teachers came up with the following suggestions for helping ensure academic integrity:

a. Design examinations and quizzes in such a way that students can see only one question per page (an option available in most LMSs) and are given only one chance to answer.

b. Randomize questions so that individual students see different questions at the same time. There should also be several versions of a test in which teachers could change the order of questions or answer options, for instance, in multiple-choice questions. This would make it impractical for students to copy, take pictures of, or otherwise distribute and share questions with classmates.

c. Create varieties of question types, based on the proficiency level of students in online examinations, to make them more challenging. It is possible with most of the available LMSs where multiple-choice questions, matching questions, fill-in-the-blank questions, dropdowns, true/false questions, short answer, and essay questions can be set up easily.

d. Use more subjective-type questions (e.g., short answer) than objective-type questions (e.g., fill-in-the-blanks, MCQs, etc.) for advanced-level students than for pre-elementary and elementary-level students. This would address the plagiarism issue to a certain extent and offer more of a challenge to advanced-level students. This opinion is in contrast to the findings of Alghammas (2020), in which the respondents preferred objective questions to subjective questions.

e. Set the duration of online tests so students do not have an overabundance of time. Teachers feel that students have too much time to complete tests, which they tend to fill by consulting one another for answers. This opinion is consistent with the advice of Tuah & Naing (2021), who propose multiple short examination sessions, each lasting about 30 minutes, as this would prevent students from consulting and sharing answers with one another.

f. Use a ‘safe exam browser’ option to prevent students from opening other web pages during exams.
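Suggestion (b) above, randomizing the order of questions and answer options, can be sketched in a few lines. Most LMSs provide this as a built-in setting, so the sketch below is only an illustration of the idea and not the interface of any particular platform. The function name `build_paper`, the dictionary layout of a question, and the seeding scheme are all assumptions introduced for the example.

```python
import random


def build_paper(questions, student_id, exam_id="quiz-1"):
    """Return one student's version of a test with shuffled question
    order and shuffled answer options.

    Seeding the generator with the exam and student identifiers
    (a hypothetical scheme) means the same version can be regenerated
    later, for example when a result is queried.
    """
    rng = random.Random(f"{exam_id}:{student_id}")
    paper = []
    for q in rng.sample(questions, k=len(questions)):  # shuffled copy
        paper.append({
            "prompt": q["prompt"],
            "options": rng.sample(q["options"], k=len(q["options"])),
            "answer": q["answer"],  # the key travels with the question
        })
    return paper


bank = [
    {"prompt": "Choose the past tense of 'go'.",
     "options": ["goed", "went", "gone", "going"], "answer": "went"},
    {"prompt": "Choose the plural of 'child'.",
     "options": ["childs", "children", "childes"], "answer": "children"},
    {"prompt": "Choose the correct article: ___ apple.",
     "options": ["a", "an", "the"], "answer": "an"},
]

for item in build_paper(bank, student_id="S001"):
    print(item["prompt"], item["options"])
```

Because the correct answer is stored with each question rather than as a position, marking is unaffected by the shuffling.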

Conduct of examinations

This section will look at the teachers’ ideas related to the conduct of examinations to minimize student malpractice and to improve academic integrity of online assessment.

1. Mechanism and logistics of conducting online examinations

a. Students should be given instructions very clearly beforehand, and mock online tests should be conducted to make sure that they are familiar with how to comply with the given rules and instructions.

b. Invigilating large groups is difficult because of the need to monitor too many cameras at once. To give them a better chance of identifying and dealing with malpractice, invigilators should have no more than 10 students at a time.

c. Multiple logins at the time of examinations should be disabled – administrators should ensure that the IP addresses of all devices used for both the LMS and video conferencing platforms are the same. Students should be permitted to log in only with their institutional email IDs and from the same IP address, and IP addresses should be recorded and matched to all online answer forms.

d. During oral examinations or presentations, students must be required to sit at an appropriate distance from their laptops/computers/mobile phones so that the students are completely visible to the examiners. This helps teachers see that students are not using their devices to cheat. Students should also be instructed beforehand to have earphones connected to their devices to enable them to listen better.

e. Where students are suspected of maliciously exaggerating technical issues to try to gain some advantage, they should be required, while observing the appropriate COVID-19 protocols, to come to the university or college campus to take the exam.
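The IP-address check proposed in point (c) above could, in principle, be automated along the following lines. The sketch assumes that administrators can export, for each student, the set of IP addresses seen by the LMS and by the video conferencing platform during the exam; the function name `find_ip_mismatches` and the data layout are hypothetical, and the addresses are reserved documentation ranges. Shared networks, mobile data, and address changes by internet providers can produce legitimate mismatches, so the output should be treated as a list of cases to review and not as proof of malpractice.

```python
def find_ip_mismatches(lms_logins, video_logins):
    """Compare per-student IP addresses from two exported logs.

    Both arguments map a student ID to the set of IP addresses
    observed for that student during the exam (assumed layout).
    Returns a dict of student ID -> list of reasons for review.
    """
    report = {}
    for student in sorted(set(lms_logins) | set(video_logins)):
        lms_ips = set(lms_logins.get(student, ()))
        video_ips = set(video_logins.get(student, ()))
        reasons = []
        if len(lms_ips) > 1:
            reasons.append("multiple LMS addresses")
        if len(video_ips) > 1:
            reasons.append("multiple video addresses")
        if lms_ips != video_ips:
            reasons.append("LMS and video addresses differ")
        if reasons:
            report[student] = reasons
    return report


lms = {"s1": {"192.0.2.10"},
       "s2": {"192.0.2.20", "198.51.100.7"},
       "s3": {"203.0.113.5"}}
video = {"s1": {"192.0.2.10"},
         "s2": {"192.0.2.20"},
         "s3": {"203.0.113.9"}}

for student, reasons in find_ip_mismatches(lms, video).items():
    print(student, reasons)
```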

2. Use of apt monitoring tools

Regarding possible strategies for monitoring students during online examinations, respondents suggested the use of online proctoring tools such as Proctorio, ProctorU, Kryterion, Respondus, etc. Tromblay (2020) favors online proctored exams as they are usually timed exams and use proctoring software which monitors students’ desktop, webcam, video, and audio during assessment activities. Such proctored exams verify the authenticity of a student’s identity to ensure and preserve the integrity of an exam and test-taking environment.

Participants suggested “the use of a ‘safe exam browser’ in conjunction with a webcam: both are required for effective online proctoring” (T17, T29). The webcam records the student throughout the examination, capturing suspicious eye and body movements and other signs of possible cheating. A safe exam browser prevents students from opening other web pages during the exam (Hussein, Yusuf, Deb, Fong, & Naidu, 2020). This is also called “computer or browser lockdown” (Alessio, Malay, Maurer, Bailer, & Rubin, 2017). Hussein et al. (2020) found Proctorio a viable option in a study conducted at the University of the South Pacific. Apparently, the system was able to conduct assessments even at low internet speeds and “online proctoring could easily be integrated into Moodle without any additional infrastructure” (Hussein et al., 2020, p. 520). The study also suggests online proctoring procedures should be followed university-wide for uniformity.

Respondents believed that institutions should do more to identify potential proctoring systems on the web and make them available for testing and evaluation.

One of the participants (T33) referred to a method of monitoring online exams and reducing cheating presented by a YouTuber (link given at the end of the references). He suggests creating a test, for example in a Google Doc, in which questions are inserted as images (captured, for example, with a snipping tool on a personal computer) rather than typed as text. If students have a habit of copying questions to find answers on the internet, using images instead of text may help prevent this.

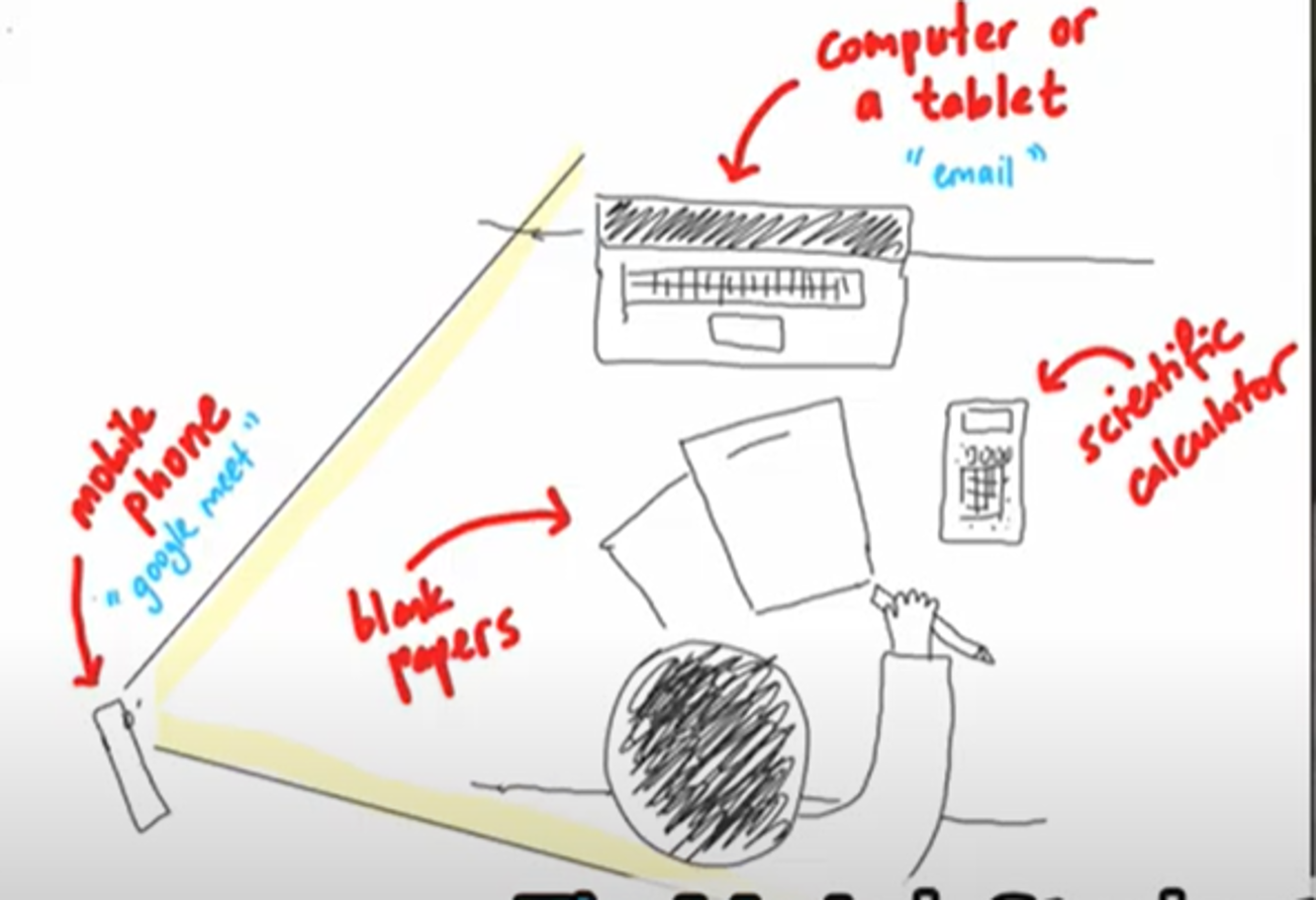

Another possible approach to monitoring suggested by the YouTuber involves creating online tests in Google Forms or Google Docs using a distinctive color. If a student attempts to access other web pages in the middle of the test, the sudden change in color scheme would immediately draw the teacher’s attention. In this scenario, students would use two devices, a laptop or PC to do the exam and a mobile phone, and position their phone camera in such a way that their face and workstation are visible to invigilators. Students should also keep their microphones on throughout the test. The setup is illustrated in Figure 1 below.

Image snipped from https://www.youtube.com/watch?v=PGSraIonQms

Figure 1. Device Set-up

Tuah & Naing (2021) have suggested a similar arrangement for conducting online examinations. Piloting mock exams beforehand is essential for both students and teachers, so everyone is clear about how the system is supposed to work. Though this idea seems pretty simple and effective, it might be difficult for some students to afford multiple devices. Or even if they could afford them, they might not be willing to use two devices and could come up with excuses to avoid having to provide them.

Evaluation of examinations

A majority of participants believed that evaluating examinations would be less problematic if all stakeholders were first able to reach a consensus about the design, layout, and conduct of online examinations. This would require administrators not only to create a host of new rules and guidelines but also to enforce them: faculty members must abide by agreed standards and not be permitted to deviate from them by using their own subjective grading methods.

Policy level suggestions

Respondents expressed concern that many of their suggestions or recommendations might prove futile unless they were addressed and incorporated at policy level. Therefore, many came up with the following policy-related suggestions for improving academic integrity and streamlining online assessment:

a. Favoring formative assessment. Though teachers were somewhat divided in their opinions about what weightage should be given to formative and summative assessment, the majority believed that giving formative assessment more weightage would help minimize discrepancies between classroom and test performances. Around 20% of teachers believed that formative assessment alone was sufficient to evaluate students.

b. Regular plagiarism and academic integrity awareness programs. Students need to be more mindful of plagiarism and academic integrity policies. Institutions’ guidelines vary and some teachers have their own ideas about how to handle non-compliance – this suggests the need for a committee or council in each department to deal with such issues swiftly and consistently. Harsh penalties should apply to students who are proven to have cheated. Institutions should also be ready to conduct re-sit examinations (online or physical) for students who deny that they have plagiarized when there is strong evidence to the contrary.

c. Repercussions for students who do not follow reasonable directions from teachers. Cameras, microphones, laptops, etc. are new additions to the examination setup, and amendments are needed at the policy level to ensure their fair use. For example, students who refuse to turn on their cameras or otherwise use their technology in a way essential for the exam to be conducted should be warned that continued defiance will result in failing that exam.

d. There should be specific and well-defined policies about the provision and use of technology which should address the following:

I. It is the responsibility of institutions to provide effective monitoring tools to be incorporated in assessment procedures.

II. There should be a uniform rule as to how many devices a student can use during the examinations. If possible, the IP addresses of devices should be recorded.

e. Teachers require adequate and appropriate training before they can be expected to use modern technology for assessment.

Despite the above, there are certain issues in online/remote teaching that need a little more effort on the part of different stakeholders:

a. Though institutions show a willingness to buy proctoring software to counteract plagiarism issues, limited institutional resources could be a matter of concern to management. Online proctoring platforms, the technical support needed to implement them, and the required training for teachers may together demand a substantial allocation of resources.

b. Professional development sessions should be conducted from time to time to keep the faculty abreast of the current assessment practices and the use of new technological tools.

c. It is not realistic to require teachers to create online quizzes and exams that require a high degree of technical expertise.

d. Teachers cannot be expected, without appropriate assistance, to take responsibility for ensuring there is no student malpractice during online assessment.

e. Some students may legitimately face internet availability, connectivity, and affordability issues.

Hence, all stakeholders must work together to deal with all these challenges. The findings of this study are generalizable and transferable to many other EFL contexts as well, since the COVID-19 pandemic has posed similar challenges in remote assessment across the world.

Implications and Suggestions for Future Research

The findings of this study could help inform the priorities, policies, and practices of educational institutions to deal with the issue more effectively.

This study may be replicated in a different context with a larger sample. Moreover, subtopics emerging from the study including using project-based assessment, ipsative assessment, proctoring tools, etc. could be taken into consideration for further research with regard to their efficacy in online assessment.

The scope of the study could be extended to other courses and disciplines to ascertain its value and/or to discover more innovative solutions.

Conclusion

It is clear that COVID-19 has severely impacted the way educational institutions operate; the findings of this study help demonstrate the degree to which trial and error were used to try to cope with such a sudden disruption to normalcy. Despite the problems and possible solutions mentioned in this work, researchers believe that a proper design, implementation, and monitoring of online learning assessment can enhance the reliability of assessment and may help students in achieving their desired learning targets and outcomes. Different methods should be tried and tested to evaluate their impact on the quality of assessment and students’ learning outcomes. Regardless of the implementation procedures followed by different educational institutions, it is imperative for everyone to come up with more efficient ways to tackle the issue of academic dishonesty and improve the quality and reliability of online exams to safeguard academic integrity and, above all, to help make the current and future generation of students better.

Having said that, more research should be conducted in this area to investigate ways of supporting all stakeholders in online assessment, as remote assessment is likely to continue for a prolonged period of time. Colleges and universities should be ready to take rigorous assessment monitoring measures to ensure that the students they produce are well prepared.

It is noteworthy that instead of continuing with trial-and-error methods with regard to teaching and assessment, a thorough and proactive approach would serve the institutions better. It would save educational institutions from wasting money and resources, creating uncertainty, causing further disruption, having to deal with unforeseen problems, etc. It is also advisable to benefit from the worthy solutions discovered by other educational bodies. One should remember that some issues could be sorted out completely with concerted efforts from stakeholders, while some others could only be minimized, but these challenges cannot be done away with altogether.

Acknowledgement

We would like to thank The Research Council of Oman for funding this project. We appreciate the University of Technology and Applied Sciences, Nizwa, Oman, for their cooperation in conducting this research.

Funding Details

The research project was funded by The Research Council (Grant number: BFP/RGP/2020/3) of Oman.

About the Authors

Surya Subrahmanyam Vellanki is currently working as a lecturer in English at the University of Technology and Applied Sciences, Nizwa, Oman. He received his Ph.D. in English from India in 2004 and Cambridge DELTA in 2016. He has over 20 years of teaching experience and his academic interests are language learning in technology enhanced and digital environments, English for specific/academic purposes, learner autonomy, learning strategies and learner styles. ORCID ID: 0000-0002-0877-652X

Saadat Mond is working at the University of Technology and Applied Sciences, Nizwa, Oman. He received an M.A. in English Linguistics (1997) and an M.A. in Education and International Development (2005) from Karachi University and the University of London, respectively. He has more than 20 years of teaching experience and his academic interests are second language acquisition, reading literacy development, language planning, learning strategies in receptive skills and technology assisted learning. ORCID ID: 0000-0002-0780-4306

Zahid Kamran Khan is presently working at the University of Technology and Applied Sciences, Nizwa, Oman. He received an M.A. in English Language (1994) from Islamia University, Bahawalpur, and an M.Phil. in English Linguistics (2009) from B Z University, Multan. He has more than 25 years of teaching experience and his academic interests are discourse analysis, second language acquisition, curriculum development, and technology enhanced language learning. ORCID ID: 0000-0003-2236-0309

To Cite this Article

Vellanki, S. S., Mond, S., & Khan, Z. K. (2023). Promoting academic integrity in remote/online assessment – EFL teachers’ perspectives. Teaching English as a Second Language Electronic Journal (TESL-EJ), 26(4). https://doi.org/10.55593/ej.26104a7

References

Abduh, M. Y. M. (2021). Full-time online assessment during COVID-19 lockdown: EFL teachers’ perceptions. Asian EFL Journal, 28(1.1), 26-46. https://tinyurl.com/AbduhMYM-2021

Alessio, H. M., Malay, N., Maurer, K., Bailer, A. J., & Rubin, B. (2017). Examining the effect of proctoring on online test scores. Online Learning, 21(1), 146–161. https://doi.org/10.24059/olj.v21i1.885

Alghammas, A. (2020). Online language assessment during the COVID-19 pandemic: University faculty members’ perceptions and practices. Asian EFL Journal, 27(4.4), 169-195. https://www.asian-efl-journal.com/monthly-editions-new/2020-monthly-editions/volume-27-issue-4-4-october-2020/index.htm

Baleni, G.Z. (2015). Online formative assessment in higher education: Its pros and cons. The Electronic Journal of e-Learning, 13(4), 228-236. https://files.eric.ed.gov/fulltext/EJ1062122.pdf

Beckman, T., Lam, H., & Khare, A. (2017). Learning assessment must change in a world of digital “Cheats”. In A. Khare, B. Stewart, & R. Schatz (Eds.), Phantom Ex Machina. https://doi.org/10.1007/978-3-319-44468-0_14

Black, P., & Wiliam, D. (2009). Developing the theory of formative assessment. Educational Assessment, Evaluation and Accountability, 21(1), 5-31. https://doi.org/10.1007/s11092-008-9068-5

Brown, D. H., & Abeywickrama, P. (2019). Language assessment: Principles and classroom practices (2nd ed.). Pearson Education ESL.

Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77–101. https://doi.org/10.1191/1478088706qp063oa

Burgess, S. & Sievertsen, H. (2020). Schools, skills, and learning: The impact of COVID-19 on education. https://voxeu.org/article/impact-COVID-19-education

Creswell, J. W. (2012). Qualitative inquiry & research design: Choosing among five approaches (4th ed.). SAGE.

Creswell, J. W., & Creswell, D. J. (2018). Research design: Qualitative, quantitative, and mixed methods approaches (5th ed.). SAGE Publications, Inc.

Creswell, J. W., & Plano Clark, V. L. (2011). Designing and conducting mixed methods research (2nd ed.). SAGE.

Fishman, T. (2009, September 28–30). “We know it when we see it” is not good enough: Toward a standard definition of plagiarism that transcends theft, fraud, and copyright. In Proceedings of the Fourth Asia Pacific conference on educational integrity (4APCEI), University of Wollongong, NSW, Australia. http://www.bmartin.cc/pubs/09-4apcei/4apcei-Fishman.pdf

Foltýnek, T., Meuschke, N., & Gipp, B. (2020). Academic plagiarism detection. ACM Computing Surveys (CSUR), 52(6), 1–42. https://doi.org/10.1145/3345317

Gabriel, T. (2010, August 2). Lines on plagiarism blur for students in the digital age. The New York Times. https://www.nytimes.com/2010/08/02/education/02cheat.html

Gamage, K. A. A., Silva, E. K. de, & Gunawardhana, N. (2020). Online delivery and assessment during COVID-19: Safeguarding academic integrity. Education Sciences, 10(11), 301. https://doi.org/10.3390/educsci10110301

Hodgkinson, T., Curtis, H., MacAlister, D., & Farrell, G. (2015). Student academic dishonesty: The potential for situational prevention. Journal of Criminal Justice Education, 27(1), 1–18. https://doi.org/10.1080/10511253.2015.1064982

Hussein, M. J., Yusuf, J., Deb, A. S., Fong, L., & Naidu, S. (2020). An evaluation of online proctoring tools. Open Praxis, 12(4), 509. https://doi.org/10.5944/openpraxis.12.4.1113

Isaacs, T., Zara, C., Herbert, G., Coombs, S. J., & Smith, C. (2013). Key concepts in educational assessment (SAGE Key Concepts series) (1st ed.). SAGE Publications Ltd.

Johnson, G. M., & Cooke, A. (2016). An ecological model of student interaction in online learning environments. In L. Kyei-Blankson, J. Blankson, E. Ntuli, & C. Agyeman (Eds.), Handbook of research on strategic management of interaction, presence, and participation in online courses (pp. 1-28). IGI Global. https://doi.org/10.4018/978-1-4666-9582-5.ch001

JISC (Joint Information Systems Committee). (2007). Effective practice with e-assessment: An overview of technologies, policies and practice in further and higher education. http://www.jisc.ac.uk/media/documents/themes/elearning/effpraceassess.pdf

Looney, J. (Ed.). (2005). Formative assessment: Improving learning in secondary classrooms. Organization for Economic Cooperation and Development.

MathMathX. (2020). Monitoring online exams and strategies to reduce cheating. https://www.youtube.com/watch?v=PGSraIonQms

Quality Assurance Agency. (2017). Contracting to cheat in higher education: How to address contract cheating, the use of third-party services and essay mills. https://www.qaa.ac.uk.docs/qaa/guidance/contracting-to-cheat-in-higher-education-2nd-edition.pdf

Richards, J. C., & Schmidt, R. W. (2010). Longman dictionary of language teaching and applied linguistics. Longman.

Roostaee, M., Sadreddini, M. H., & Fakhrahmad, S. M. (2020). An effective approach to candidate retrieval for cross-language plagiarism detection: a fusion of conceptual and keyword-based schemes. Information Processing and Management, 57(2), 102150. https://doi.org/10.1016/j.ipm.2019.102150

Sakamoto, D., & Tsuda, K. (2019). A detection method for plagiarism reports of students. Procedia Computer Science, 159, 1329–1338. https://doi.org/10.1016/j.procs.2019.09.303

Şenel, S., & Şenel, H. C. (2021). Remote assessment in higher education during COVID-19 pandemic. International Journal of Assessment Tools in Education, 181–199. https://doi.org/10.21449/ijate.820140

Swain, J. M., & Spire, Z. D. (2020). The role of informal conversations in generating data, and the ethical and methodological issues. Forum Qualitative Sozialforschung / Forum: Qualitative Social Research, 21(1). https://doi.org/10.17169/fqs-21.1.3344

Tromblay, D. (2020). How online exam proctoring and remote exams work. WebCE. https://www.webce.com/news/2020/07/14/how-online-exam-proctoring-and-remote-exams-work

Tuah, N. A. A., & Naing, L. (2021). Is online assessment in higher education institutions during COVID-19 pandemic reliable? Siriraj Medical Journal, 73(1), 61–68. https://doi.org/10.33192/Smj.2021.09

Welch, K. (2020, December 14). Empowering remote learners through self-assessment. Cambridge University Press. https://www.cambridge.org/elt/blog/2020/12/14/empowering-remote-learners-self-assessment/

Xu, H., & Mahenthiran, S. (2016). Factors that influence online learning assessment and satisfaction: Using Moodle as a learning management system. International Business Research, 9(2), 1. https://doi.org/10.5539/ibr.v9n2p1

Yulianto, D., & Mujtahin, N. M. (2021). Online assessment during COVID-19 pandemic: EFL teachers’ perspectives and their practices. Journal of English teaching, 7(2), 229–242. https://doi.org/10.33541/jet.v7i2.2770

Zhou, J., & Zhang, J. (2017). Using ipsative assessment to enhance first-year undergraduate self-regulation in Chinese college English classrooms. In G. Hughes (Ed.), Ipsative assessment and personal learning gain (pp. 43–64). Palgrave Macmillan. https://doi.org/10.1057/978-1-137-56502-0_3

Copyright of articles rests with the authors. Please cite TESL-EJ appropriately. Editor’s Note: The HTML version contains no page numbers. Please use the PDF version of this article for citations.